Your BS Detector for Supplement Claims

"Science-backed" is plastered on every supplement bottle. But what does it actually mean — and how can you verify it yourself? Here's a framework.

👋 Hola! Welcome to Out of Singapore. I’m Shan, and I’m building Xandro Lab, a longevity science brand. Every week I share raw notes on building, marketing, and navigating the messy world of health and performance.

Last week, during a feedback session, one of our livestreamers asked a pointed question about LPC Neuro: the DHA dosage looks low compared to regular fish oil, yet it’s one of the priciest on the market. What’s the justification?

Rather than just defend the product, I wanted to use this as a starting point for something bigger — what do “science-backed” and “evidence-based” actually mean in supplements? And how can you, as a consumer, cut through the marketing?

Consider this your insider’s guide.

In this post:

Why “science-backed” is legally meaningless

Three tiers of evidence in supplements

Why the form of an ingredient matters more than you think

Dosage theatre: studied vs practical

Proprietary blends vs transparent formulas

When science meets regulatory reality

How to fact-check any supplement claim yourself

1. “Science-Backed” Has No Legal Definition

Walk through any supplement aisle and you’ll see “clinically proven,” “research-backed,” and “scientifically formulated” on every other bottle. These phrases sound authoritative. They’re also essentially meaningless from a regulatory standpoint.

Unlike pharmaceutical drugs, supplements don’t require clinical trials proving efficacy before hitting the market. Companies can reference research that’s tangentially related to their product and call it “science-backed.” The research might be on a different form of the ingredient, a different dose, a different population, or even a different compound entirely.

This isn’t always deception — sometimes it’s the best available evidence. But consumers deserve to understand the difference between “we conducted a clinical trial on this exact product” and “there’s a study on one of our ingredients.”

2. Three Tiers of Evidence

When evaluating any supplement claim, it helps to know which tier of evidence supports it:

Tier 1: Direct product research. Clinical trials conducted on the specific formulation at the dose being sold. This is the gold standard but rare — trials are expensive and products aren’t patent-protected like drugs.

Tier 2: Ingredient-level research. Published studies on individual ingredients at specific doses. Most legitimate supplement claims fall here. The key questions: Is the form the same? Is the dose the same? Was the study in humans?

Tier 3: Mechanistic or preclinical evidence. Cell studies, animal research, or theoretical mechanisms explaining why something should work. This is where most “emerging” ingredients sit. It’s not worthless — it’s how science progresses — but it’s not proof of efficacy in humans.

The problem arises when marketing presents Tier 3 evidence with Tier 1 confidence.
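To make the tiers concrete, here is a minimal sketch of how you might sort a cited study into one of them. The `Evidence` fields and both function names are my own illustration, not an industry standard, and real studies rarely reduce to four yes/no answers.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """One cited study, described by the questions raised in the tiers above."""
    in_humans: bool      # was the study run in people?
    exact_product: bool  # was the finished formulation itself tested?
    same_form: bool      # same form of the ingredient as in the product?
    same_dose: bool      # dose comparable to what the product delivers?


def evidence_tier(e: Evidence) -> int:
    """Map a study onto tier 1, 2 or 3 as described in this post."""
    if not e.in_humans:
        return 3  # cell, animal or purely mechanistic work
    if e.exact_product and e.same_dose:
        return 1  # trial on the specific formulation at the dose sold
    return 2      # human research on an individual ingredient


def caveats(e: Evidence) -> list[str]:
    """List the mismatches a reader should ask the brand about."""
    notes = []
    if not e.same_form:
        notes.append("different form of the ingredient")
    if not e.same_dose:
        notes.append("different dose")
    if not e.in_humans:
        notes.append("not a human study")
    return notes


# Example: a human study on another form of the ingredient, at the same dose.
study = Evidence(in_humans=True, exact_product=False, same_form=False, same_dose=True)
print(evidence_tier(study), caveats(study))
```

A Tier 2 result with a non-empty caveat list is the common case: legitimate research, but not quite on the thing in the bottle.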

3. Forms Matter More Than You Think

Here’s where it gets interesting. The same compound can exist in multiple forms with very different research profiles.

Magnesium is a well-studied mineral for sleep, muscle function, and bone health. Historically, most large-scale outcome studies used magnesium oxide and magnesium citrate — they were cheaper and more widely available when the trials were conducted. Today, magnesium glycinate (bisglycinate) has become the popular consumer choice.

Is glycinate under-researched? Not exactly. It has solid bioavailability data showing it’s well-absorbed and gentler on the stomach than oxide. Recent double-blind trials show modest but real effects on sleep quality. The research base is growing. But if you’re comparing volume of published outcome studies, oxide and citrate still dominate — not because they’re superior, but because they have a head start.

Then there’s magnesium L-threonate — marketed specifically for brain health. It’s patent-protected by one company, and virtually all published studies on it come from that same company. This isn’t unusual in the supplement world. It shows how commercial interests shape what gets researched and how.

CoQ10 exists as ubiquinone (oxidised form) and ubiquinol (reduced, active form). Ubiquinol is marketed as superior because it’s “body-ready,” but the research base is mixed on whether this matters for most people.

NAD+ precursors have been studied as niacin and nicotinamide (the two classic forms of vitamin B3), nicotinamide riboside (NR), and nicotinamide mononucleotide (NMN). Each has different absorption, metabolism, and research profiles. When someone says “NAD+ boosters are proven,” the immediate question is: which form, at what dose?

The point isn’t that newer forms are scams — some may genuinely be better. The point is that research on Form A doesn’t automatically validate Form B.

4. Dosage Theatre

Creatine monohydrate is one of the most well-studied supplements in existence. The research spans doses from 3 grams (maintenance) to 20 grams per day (loading phase).

But here’s what the labels don’t tell you: your body already makes about 1 gram of creatine daily, and if you eat meat, you’re getting another 1-2 grams from food. Red meat and fish contain roughly 4-5 grams of creatine per kilogram of raw meat. The catch? Cooking degrades creatine significantly — a well-done steak may have lost most of it.

So how much meat would you need to eat to hit the 5-gram daily dose used in studies? About 1 kilogram of lightly cooked beef. Every day. The 20-gram loading doses some protocols recommend? That would require 4-5 kilograms of meat daily — obviously absurd.

This is why creatine supplementation makes sense for athletes and heavy trainers: food simply can’t deliver study-level doses. But for the average person eating a balanced omnivorous diet, you’re already getting 2-3 grams daily between endogenous production and food. The question becomes: do you actually need to supplement to 5 grams, or is that dose based on research designed to show maximum effect rather than practical benefit?

This is dosage theatre — where labels and protocols advertise amounts that sound impressive but may not reflect what typical consumers actually need.
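If you want to check the creatine arithmetic yourself, here is a rough sketch. The constants are the approximate figures quoted in this section; the function names are just illustrative, and cooking losses are ignored, so real-world meat quantities would be higher.

```python
# Approximate figures quoted in this post.
CREATINE_G_PER_KG_RAW = (4.0, 5.0)   # red meat and fish, raw weight
ENDOGENOUS_G_PER_DAY = 1.0           # made by the body
DIETARY_G_PER_DAY = (1.0, 2.0)       # from food, if you eat meat


def meat_kg_for_dose(dose_g: float) -> tuple[float, float]:
    """Raw meat (kg) needed for dose_g of creatine, as (best case, worst case).

    Cooking losses are ignored, so real-world amounts would be higher.
    """
    low_content, high_content = CREATINE_G_PER_KG_RAW
    return dose_g / high_content, dose_g / low_content


def baseline_g_per_day() -> tuple[float, float]:
    """Creatine an omnivore already gets: endogenous production plus food."""
    low_food, high_food = DIETARY_G_PER_DAY
    return ENDOGENOUS_G_PER_DAY + low_food, ENDOGENOUS_G_PER_DAY + high_food


def supplement_gap_g(target_g: float) -> tuple[float, float]:
    """Grams still missing to reach target_g, as (smallest gap, largest gap)."""
    low_base, high_base = baseline_g_per_day()
    return max(target_g - high_base, 0.0), max(target_g - low_base, 0.0)


print(meat_kg_for_dose(5))    # (1.0, 1.25)
print(meat_kg_for_dose(20))   # (4.0, 5.0)
print(baseline_g_per_day())   # (2.0, 3.0)
print(supplement_gap_g(5))    # (2.0, 3.0)
```

So the real question for an average omnivore is whether closing a 2-3 gram gap is worth it, not whether 5 grams sounds like a lot.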

When Bioavailability Flips the Dosage Script

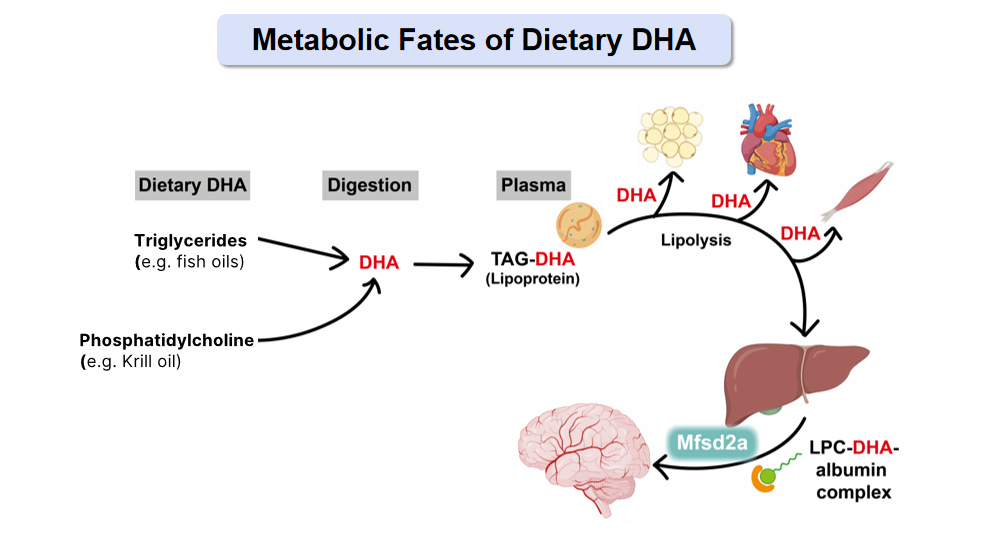

Sometimes the “low dose” product is actually delivering more of what matters. This brings us back to the question that sparked this post: why does LPC Neuro have such a low DHA dose compared to regular fish oil?

Standard fish oil supplements compete on milligram counts — 1000mg, 2000mg, triple strength. The assumption is intuitive: more is better. But this ignores a fundamental question: how much of what you swallow actually reaches the target tissue?

Traditional fish oil delivers DHA in triglyceride form. Your digestive system breaks it down, repackages it, and distributes it primarily to adipose tissue (fat), heart, and liver. The brain? It has a specialised transport system, a protein called Mfsd2a whose role as a DHA transporter was identified in 2014, that only accepts DHA attached to a specific carrier molecule called lysophosphatidylcholine (LPC).

Standard fish oil barely produces this form. Your liver can convert some, but the process is inefficient, especially as we age or if we carry certain genetic variants like APOE4.

This explains a puzzle that’s bothered researchers for decades: populations that eat fish regularly show better cognitive outcomes, but fish oil supplement trials often fail to replicate these benefits. The DHA in whole fish exists partially in phospholipid forms that generate LPC-DHA during digestion. Concentrated fish oil is almost entirely triglyceride-bound — the form your brain struggles to access.

When you deliver DHA in the LPC form directly, animal studies show meaningful brain uptake at doses that would seem trivially small compared to conventional fish oil recommendations. You’re not compensating for inefficiency; you’re bypassing it.

Is the human clinical evidence conclusive? Not yet — those trials are still building. But the mechanism is well-established, and it illustrates a broader point: milligrams on a label tell you what’s in the bottle, not what reaches your cells.


5. The Blend Question

You’ll sometimes hear that “blends don’t have research behind them.” This is partially true but oversimplified.

Some ingredient combinations are genuinely well-studied. Caffeine and L-theanine together have solid research — the theanine smooths caffeine’s jittery edge while preserving alertness. Caffeine and taurine combinations appear repeatedly in energy drink research. These aren’t random pairings.

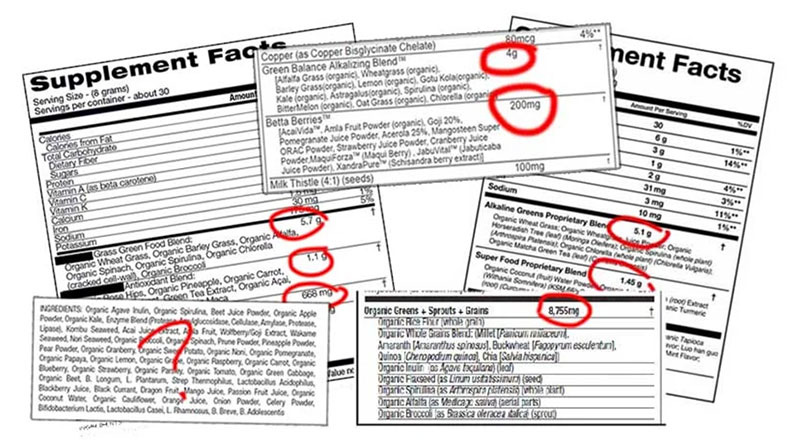

The real problem is proprietary blends — formulations that list a combined weight but hide individual ingredient amounts. When a label says “Energy Matrix: 3000mg” followed by eight ingredients, you have no idea whether any single ingredient is present at an effective dose. The first ingredient might be 2900mg of cheap filler.

Any formula that fully discloses individual quantities — even if the specific combination hasn’t been clinically tested — gives you enough information to cross-reference against published research. That transparency matters more than whether someone ran a trial on the exact blend.
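Here is a small sketch of just how little a proprietary blend label tells you. It assumes the ingredients are listed in descending order by weight, which is a common labelling convention but not something to take for granted in every market, so treat that as my assumption. The function name is illustrative.

```python
def blend_bounds(total_mg: float, n_ingredients: int) -> list[tuple[float, float]]:
    """(min_mg, max_mg) for each listed position in a proprietary blend.

    Assumption: ingredients are listed in descending order by weight.
    """
    bounds = []
    for position in range(1, n_ingredients + 1):
        # Everything listed at or before this position weighs at least as
        # much as this ingredient, so position * amount <= total.
        upper = total_mg / position
        # Only the first ingredient has a guaranteed floor: as the largest,
        # it must be at least the average. The rest can be close to zero.
        lower = total_mg / n_ingredients if position == 1 else 0.0
        bounds.append((lower, upper))
    return bounds


# The "Energy Matrix: 3000mg" example with eight ingredients.
for position, (low, high) in enumerate(blend_bounds(3000, 8), start=1):
    print(f"ingredient {position}: between {low:.0f} and {high:.0f} mg")
```

For the eight-ingredient example, the first ingredient could be anywhere from 375mg to nearly the full 3000mg, and the last one could be anywhere from a trace to 375mg. With a fully disclosed formula, these ranges collapse to single numbers you can compare against published doses.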

![AG1 Review | Everything you need to know about AG1! [2026]](https://substackcdn.com/image/fetch/$s_!fGf1!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F1d3ec25c-52e8-4bee-b491-99eb6de3d7c3_3803x2665.jpeg)

At Xandro, Protocol X is technically a blend. But we disclose every ingredient amount. And we’re currently running a human study in Singapore comparing it head-to-head against pure NMN for NAD+ outcomes — the first study of its kind here. That’s what building real evidence looks like: not just citing ingredient studies, but testing your actual formulation.

6. When Science Meets Regulatory Reality

Here’s something most consumers don’t realise: what’s “science-backed” in one country may be illegal in another. Regulatory bodies have their own interpretations, and they don’t always align with the research.

TMG (trimethylglycine) is a well-established methylation compound. Betaine Anhydrous — the common supplemental form — is not approved for sale in Malaysia, while Betaine and Betaine Hydrochloride are permitted. Same compound family, different regulatory status.

Methylated B12 is considered the more bioavailable form of vitamin B12. But in Korea, methylcobalamin is restricted to pharmaceutical products — it can’t be used in dietary supplements. When we manufactured our methylated multivitamin in Korea, we had to use regular B12 instead. This year, we’ve decided to focus the product on Singapore only and update the formula with methylated B12. Such are the realities of ingredient science meeting regulatory fragmentation.

NAC (N-acetyl cysteine) has a complicated status even in the US. The FDA technically excludes it from the dietary supplement definition because it was approved as a drug first (in 1963). But they now exercise “enforcement discretion” — effectively allowing sales while the regulatory status remains unresolved. It’s been sold as a supplement for over 30 years.

Berberine in Singapore was historically restricted under the Poisons Act due to concerns about jaundice in G6PD-deficient infants. HSA has allowed berberine in Chinese Proprietary Medicines since 2013 and in Chinese herbs since 2016. But it’s still not freely available as a general health supplement ingredient.

The lesson: “science-backed” often conflicts with on-ground regulatory realities, supply chain constraints, and regional health authority interpretations. A well-researched ingredient might simply be unavailable in your market.

7. How to Fact-Check Any Claim Yourself

The good news: you have better tools than ever to verify supplement marketing. AI assistants can now query research databases directly. You’re not limited to reading brand blogs that cherry-pick studies.

Step 1: Identify the specific claim. “Supports brain health” is too vague. Look for measurable claims: “increases NAD+ levels,” “improves cognitive function scores,” “reduces inflammatory markers.”

Step 2: Find the cited research. Good companies link to studies. If they don’t, ask. If they can’t provide them, that’s your answer. (A simple way is to go to the white paper on the product.)

Step 3: Check study relevance. Is this the same form of the ingredient? Is the dose similar? Were subjects human? Who funded it?

Step 4: Look for replication. One study isn’t proof. Multiple independent studies showing consistent results is more convincing.

Step 5: Use AI tools. Ask ChatGPT, Claude, Google AI or Perplexity: “What does the research say about [ingredient] at [dose] for [outcome]?” These tools draw largely from published literature, not marketing content, and they tend to surface limitations and contradictions that brand materials omit. You can also question the results the AI tools cite, asking them to verify claims rather than just give an answer.

This is genuinely new. A few years ago, your options were Google (which surfaced brand blogs and affiliate content) or PubMed (which requires scientific literacy to navigate). Now you can ask plain-language questions and get synthesised answers from research databases.

Try it: “What dose of NMN has been studied in human trials?” or “Does magnesium glycinate have more research than magnesium citrate?” You’ll get more nuanced answers than any product page will give you.
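If you check claims often, the Step 5 question and the follow-ups from Steps 3 and 4 can be turned into a reusable template. This is only a sketch: the helper names are made up, the outcome in the example is a placeholder, and the output is plain text to paste into whichever assistant you use.

```python
def research_question(ingredient: str, dose: str, outcome: str) -> str:
    """Fill in the question template from Step 5."""
    return f"What does the research say about {ingredient} at {dose} for {outcome}?"


def follow_up_questions(ingredient: str) -> list[str]:
    """Follow-ups drawn from Steps 3 and 4: relevance and replication."""
    return [
        f"Which form of {ingredient} did each study use, and at what dose?",
        "Were the subjects human?",
        "Who funded each study?",
        "Have independent groups replicated the result?",
        "Please verify each cited study and flag anything you are unsure about.",
    ]


# Example run; "muscle strength" is a placeholder outcome for illustration.
print(research_question("creatine monohydrate", "5 g per day", "muscle strength"))
for question in follow_up_questions("creatine monohydrate"):
    print("-", question)
```

The last follow-up matters most: asking the tool to verify its own citations is what turns a confident answer into a checkable one.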

8. Use This on Us Too

I wrote this as an insider sharing how the industry works — not as someone claiming exemption from scrutiny.

Everything in this post applies to Xandro’s products. Ask us which tier of evidence supports our claims. Ask us why we chose specific forms and doses. Ask us what isn’t yet proven.

If we can’t answer clearly, that tells you something. If we can, that tells you something too.

For a deeper dive into why traditional omega-3 supplements have failed to deliver on brain health promises — and what the emerging LPC-DHA research suggests — I wrote about it in detail here: Spilling secrets on omega supplements.

The goal isn’t to find supplements with “proven” benefits in some final sense. Science doesn’t work that way. The goal is to make informed decisions based on the best available evidence — while remaining open to updating as new data emerges.

The tools to verify are in your hands. Use them.

Questions about evaluating supplement claims? Drop them in the comments — happy to dig into the evidence together.

See you next Sunday!

- Shan